By MICHAEL GOLDMAN

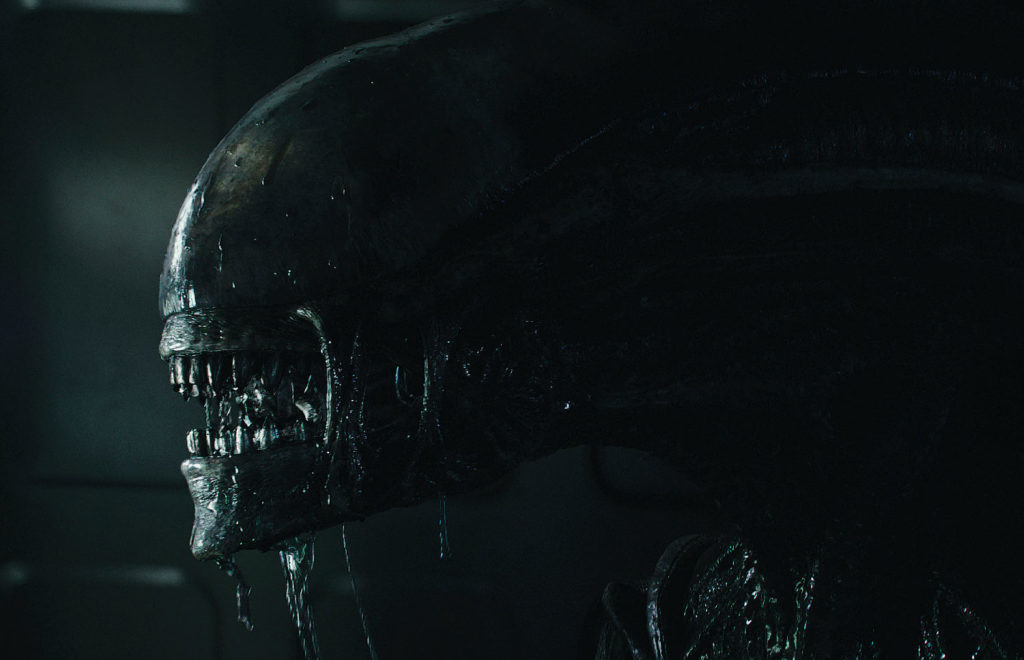

Alien: Covenant (Photo copyright © 2017 20th Century Fox. All Rights Reserved.)

Some of the huge VFX movies hitting big screens this summer feature iconic creatures we have seen before. The fact that Hollywood likes to revive stories and characters from books, comics, television and older movies is nothing new, but in the modern era, putting a new twist on characters or creatures of a fantastical nature, let alone ones with historical pedigrees, requires unbelievably complex visual effects work.

During summer 2017, among the familiar creatures returning prominently to the big screen are the famous talking apes in War for the Planet of the Apes, the third movie in the modern reboot of the Planet of the Apes franchise, featuring lead digital character Caesar.

We’re also getting a new imagining of The Mummy, with Sofia Boutella playing a malevolent Egyptian princess returned from the dead, but this time swathed not in ancient bandages as much as various CG augmentations. And then, Ridley Scott’s original Xenomorph Alien returns in Alien: Covenant, as murderous as ever, but with far more advanced technical support to bring the creature’s skin and muscles to life.

Visual effects supervisors charged with reinventing these CG creatures face many challenges, and those challenges are not confined to cinema: broadcast television is having a similar visual effects renaissance for iconic creatures and characters. Agents of S.H.I.E.L.D. Visual Effects Supervisor Mark Kolpack talks about that show’s success this past season resurrecting the classic comic-book character known as Ghost Rider.

War for the Planet of the Apes (Photo copyright © 2017 20th Century Fox. All Rights Reserved.)

APES EVOLVE

WETA Digital’s Dan Lemmon served as Visual Effects Supervisor on War for the Planet of the Apes in partnership with Joe Letteri, Senior Visual Effects Supervisor. Lemmon points out that the original 1968 film, Planet of the Apes, used human actors in ape costumes and extensive prosthetics. Though modest-looking by today’s standards, that work earned legendary makeup designer John Chambers an honorary Academy Award in an era before the Academy even offered a separate makeup award. That standard, Lemmon notes, lingers in the form of added pressure for today’s filmmakers “because we had big shoes to fill” when they first rebooted the franchise in 2011 with Rise of the Planet of the Apes.

“But that movie wasn’t just a reboot – it was an origin story that told how hyper-intelligent apes developed from common chimpanzees, gorillas, and orangutans,” Lemmon explains. “At that time, our story began with common apes, and so our apes had to look indistinguishable from apes our audience would be familiar with. Apes have much shorter legs and much longer arms [than humans] and obviously they generally don’t wear clothes, so we couldn’t hide anatomical differences under tunics like they did in [the original movies]. Also, our apes move quadrupedally, like real apes do, which is a real challenge for any actor in an ape suit. Most importantly, the apes in our reboot did not yet speak, so much of Rise is a silent film – Caesar speaks four words in the entire film.”

These factors led WETA to develop methods of emphasizing physical and facial performances of CG apes to communicate with audiences. “We adjusted our toolset to fit that particular movie and used performance capture, rather than prosthetic makeup, to achieve the most articulate and realistic apes for the story we were trying to tell,” Lemmon adds.

Fast forwarding to 2017, two movies later, WETA’s ability to continually evolve its apes has grown exponentially. As Lemmon points out, “one of the advantages of digital characters is that you have the ability to improve them.”

Therefore, WETA has again upgraded Caesar and his pals. As a result, for the current movie, Lemmon says “we have changed little details in Caesar’s face to help him better hit some of the intensity and subtlety that we see in [actor] Andy Serkis’s performance [during performance-capture sessions].”

“We’ve rebuilt our fur system so that we can carry literally millions more hair strands on our characters, and style them in ways that are much more realistic than we could achieve before. We also took our ray-tracing engine, Manuka, and added a new physically-based camera and lighting system to it, which allows us to light and ‘photograph’ our digital apes in a way that more closely matches the on-set cinematography and is more realistic than ever before.”

MUMMY’S AUGMENTATION

In the 1930s, Boris Karloff, swathed in bloody bandages, carried the Mummy illusion with his performance. In 1999 and 2001, Arnold Vosloo played the creature in a couple of feature films co-starring Brendan Fraser, and Jet Li gave it a try in 2008. For those shows, CG was introduced, but largely to give the Mummy mystical powers. The current movie is meant to be a more faithful remake of the original 1932 film, but with a female Mummy (Sofia Boutella).

Erik Nash, Visual Effects Supervisor on the project, says filmmakers decided early on they wanted a hybrid approach to the appearances of the Mummy and her undead minions – only going full CG when absolutely necessary. The idea was to get as much normal director-actor interaction as possible, using the actor’s performance as the creature’s foundation.

“Our primary and default methodology was to use augmentation,” Nash explains. “With two notable exceptions, the creatures in The Mummy were created by replacing portions of the performer’s anatomy with digitally-created body parts. This approach required phenomenal amounts of character tracking, rotoscoping and roto-animation. But it resulted in creatures that were undeniably physically real, and grounded in their respective environments.

“[This way], our director could guide Sofia as he would any other actor, and it gave the other actors a flesh-and-blood character to play against.”

An identical approach was taken with the undead corpses who serve her. “They were reanimated using stunt performers and dancers in aged and tattered wardrobe who were photographed in the scene,” Nash says. “The costumes they wore and the performances they gave would be seen in the finished film. Moving Picture Company’s VFX team would painstakingly replace exposed heads, arms, and sometimes legs with CG extremities that were gruesomely aged, emaciated and decayed.”

Nash feels the best of both worlds was achieved this way, because the end result was characters built on performances of live actors on set, but “they had heads and limbs that were clearly not those of live human beings. Our creature designs took advantage of this approach by featuring damaged and missing tissue, yielding an appearance that could not possibly be achieved through applied prosthetics.”

There were only two exceptions to this approach where full CG was utilized. The first instance was the Mummy’s initial appearance. She would later undergo a progressive transformation, but when first appearing, the character “is in such an extreme state of decay, and her limbs are disjointed,” Nash explains. “So a full CG solution was mandatory. Her extreme condition and its attendant inhuman range of motion demanded bespoke rigging and key-frame animation.”

The second exception was a sequence shot inside a taxicab where undead characters attack the story’s heroes. Nash says filmmakers “preferred not to be constrained by the physical presence of the undead antagonists in such tight quarters.” Therefore, they agreed to shoot the scene without an actor representing the undead characters. “In editing, the most successful performances were [cut together with] CG characters in action,” he adds.

MORE DYNAMIC, ORGANIC XENOMORPH

Visual Effects Supervisor Charley Henley refers to the challenge of revisiting the so-called Xenomorph creature from the Alien franchise for the new film, Alien: Covenant, as “a daunting challenge to satisfy [director] Ridley Scott’s previous success,” meaning the original version of the creature from Scott’s famous 1979 film. Scott’s mission statement this time, Henley says, was to return on the one hand to “the first incarnation of the Xenomorph” as referenced in 1970s-era creature designs from Swiss artist H.R. Giger, who developed the creature’s original look; and yet, on the other hand, to be able to avoid “the limitations Ridley faced when shooting the original Alien” in terms of how to make the creature’s movements convincing and small details visible to the camera.

“The process was one of trying to run the evolution of the creature in reverse, to get back to the source and then manage, with restrained control, what we could now achieve with current CG technology and techniques,” Henley explains. “During pre-production, we referenced what Ridley liked most from the original Giger drawings, and [MPC’s] art department helped conceptualize ideas for all the creatures. After much debate, it became clear that Ridley wanted the ability to shoot practically where possible, and so Creature Design and Makeup Effects Supervisor Conor O’Sullivan took over the design to prep for the shoot. The [original] Xenomorph design was too tall at eight feet six inches to work as a conventional suit, so the creature team conceived a hybrid approach inspired by Japanese Bunraku puppets.”

That practical methodology allowed Scott’s team to launch into principal photography using a version of the Xenomorph to direct and interact with actors even while the visual effects team kept refining the design further in post, preparing a final CG version.

“Based on scans and texture photography of the practical puppet, MPC’s creature department started developing the final CG Xenomorph,” he explains. “The base was as per Giger’s original design, but the surface details and muscles were somewhat more elegant, using references from [wax anatomical sculptures at Italy’s Museo della Specola]. Going CG allowed for a complexity in surface movement using muscle firing systems and the latest in skin shading, while finding the right skin qualities took patient look-development with a special shader written for the creature’s cranium, which had an aspic translucent quality.”

Henley adds the biggest complexity then became making the Xenomorph more “dynamic and physical” and “more organic” than the original. Thus, “developing the physical performance character for it was the hardest thing to wrangle,” he says. “Ridley didn’t want to be limited like he had been in 1978, but it needed to keep its edge. Motion capture took us some of the way, and we also experimented with references of animals and insects, along with key framing.”

GHOST RIDER SETS TV ON FIRE

When ABC/Marvel’s Agents of S.H.I.E.L.D. launched a major story arc this past season featuring Ghost Rider, a well-known comic-book character that had also had a two-movie theatrical run, the show had to illustrate a man’s transformation into a demon with a flaming skull for a head. For good measure, his vehicle – a souped-up 1969 Dodge Charger dubbed the “Hell Charger” – flames up along with him.

Visual Effects Supervisor Mark Kolpack headed what was, by television standards, a massive project to bring the Ghost Rider to life in collaboration with various VFX facilities, particularly Sherman Oaks’ FuseFX, the show’s lead vendor. Kolpack spent his seasonal hiatus formulating a plan to make the character viable for the show’s storyline, financial and scheduling needs, and to satisfy longtime fans of the character. He studied original Felipe Smith comic-book drawings of the Ghost Rider, created Photoshop concepts, and analyzed the 2007 and 2011 Ghost Rider films. Eventually, he concluded the primary challenge would be dealing with the character’s inherently “dead face.”

“How can an audience relate to a character that has no skin or eyes?” he wondered. “My quest became to figure out ways that [the audience] could relate emotionally to him.”

On the front end, his team needed to create various elements and lighting to mix-and-match with CG components. He eventually concluded that “LEDs would play an important part” on set. Filmmakers collaborated with his team on a wide range of solutions, including: “a special rig with a spinning, stand-in tire” for the vehicle; various other car elements; a balaclava hood, and corresponding Ghost Rider costume configured with LED strips and tracking markers to create interactive lighting elements; various methods for spreading LED light from the character to other elements on set; a special VFX shoot to capture real fire elements, and so on.

Kolpack says FuseFX built a special in-house pipeline and labored long on a wide range of details. But to solve the aforementioned “dead face” problem, Kolpack says he took a cue from another Marvel character he had recently seen.

“In Deadpool, I saw a squash-and-stretch technique used on his eyes to help him emote through his mask and thought it was brilliant,” he says. “So I decided to employ it on Ghost Rider. Then we also had the tiny coal eyes burn inside the orbits themselves.”

An important touch came from making the character what Kolpack calls “a motorhead,” meaning bony indentations on the side of the skull essentially acted like exhaust ports and blew out hot flames. Kolpack had the FuseFX team add a burning flameball effect for that look by running fluid simulations in Houdini based on fire images he had shot years earlier using a Vision Research Phantom Flex camera.

Using a photogrammetry process, filmmakers also scanned actor Gabriel Luna’s head and body at 3D Scan LA, providing them with geometry and textures for rebuilding various body parts or the interior of his jacket. “The detailed head scan was done for the purpose of his burn reveal into Ghost Rider,” Kolpack elaborates. “We also shot a series of expressions to be used as morph targets so we could match the actor’s performance, and we had simultaneous beauty and polarized texture stills shot after the scanning.”

Kolpack hopes Ghost Rider illustrates what is now possible for broadcast TV and, at the end of the day, “helped toward raising production values overall in [television] visual effects.”