By TREVOR HOGG

Images courtesy of Attila Áfra and Intel, except where noted.

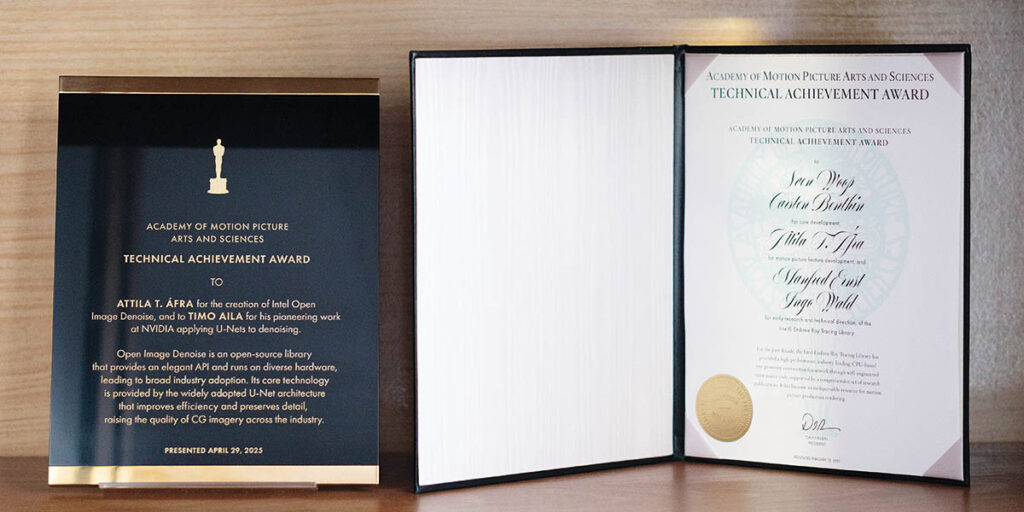

What makes the entertainment industry unique is that artists and technicians have to work together in order to turn a creative idea into something tangible. Making a significant contribution on the technological side of the equation is Attila T. Áfra, who has gone from writing a series of theses on ray tracing while getting a Ph.D. in Computer Science at Babeș-Bolyai University to being co-honored with an Academy Award for Technical Achievement for early research and technical direction of the Intel Embree Ray Tracing Library in 2021, and another for pioneering work at Intel applying U-Nets to denoising in 2025.

His fascination with computer programming was fostered at a young age. “My father was already interested in electronics and introduced me to programming when I was in second or third grade,” recalls Áfra, Principal Engineer at Intel, Open Image Denoise. “I got this Romanian clone of the ZX Spectrum and a programming book from him. We sat down and did a few things together, but after a while he realized that I could do the learning on my own. The technical affinity in the family was always strong, and it was a natural thing to get into programming and computer science because it was a hot topic at that time. All of these new opportunities opened up just a few years after the revolution in Romania. Before that, you could only access mainframe computers at big companies; personal computers were completely unknown. At that time, it was possible for anyone to buy a personal computer and do something with it. My parents thought this could be a promising direction to go. It was an experiment to see whether I would be interested or not, and the experiment was successful!”

Reading PC Magazine as a grade five student led to an epiphany. “PC Magazine came with a CD, and on that were application and game demos,” Áfra states. “The demo scene was something that caught my attention a lot, because these little programs, ranging from a few kilobytes or a couple of hundred bytes to megabytes, were all about showing off the skills of these mostly anonymous programmers. It was mind-blowing that it was possible to render some interesting effects in just a couple of hundred bytes, and also that they were written in assembly code. Regardless of the complexity of the code, visually, these were more impressive than anything else I’d seen before. There were tutorials in the magazine about how to write some of these little effects; how to render a Mandelbrot fractal in 256 bytes, for example. I started to move more into the graphics direction in programming.”

Sparking an interest in ray tracing was the release of Avatar. “Avatar introduced ray tracing in a crude way,” Áfra notes. “This was a joint effort between Wētā FX and NVIDIA, and they were actually rendering some gigantic datasets, which were much bigger than what anyone else had done. Ray tracing was something appealing, because before that, most of these large-scene rendering algorithms were based on rasterization. But ray tracing has some inherent algorithmic advantages compared to rasterization. Avatar was a huge milestone at that time, and that inspired me to go deeper into ray tracing. But even before my Ph.D., I did some ray tracing experiments, mostly ray casting, and I had a little project where I was visualizing planet-scale atmospheric scattering in real time. I wrote that for a local competition at the university, and technically, that was my first ray tracing or ray casting project.”

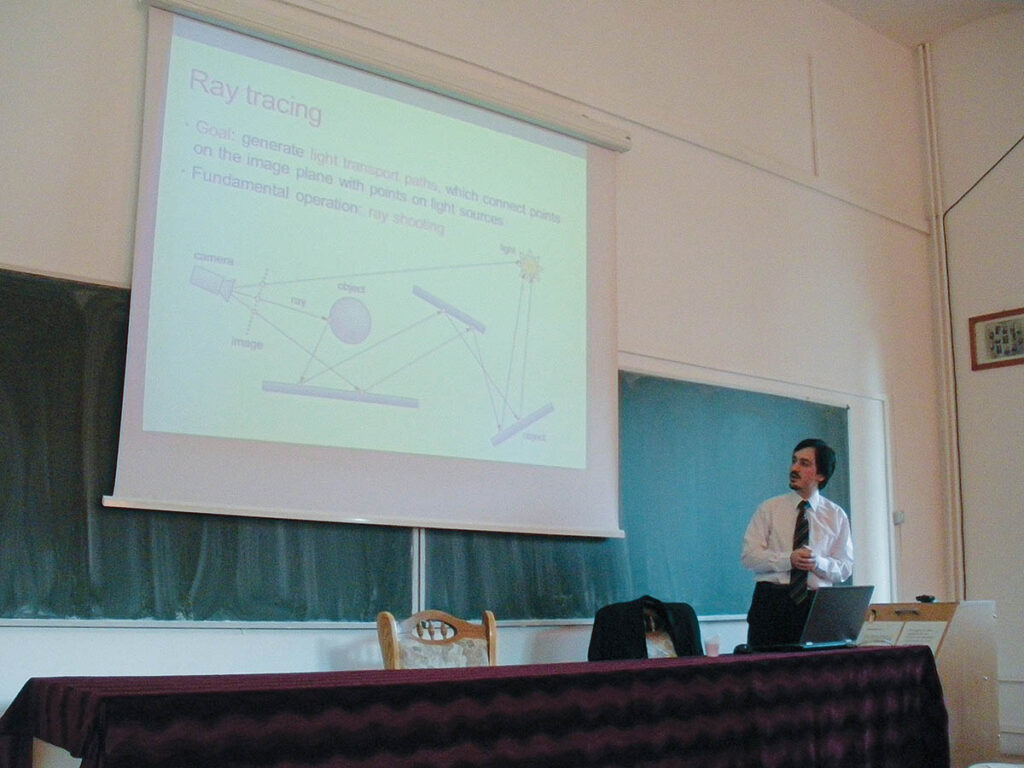

Áfra spent time as a teaching assistant at Babeș-Bolyai University in Romania. “I haven’t taught that much at universities, mostly during my Ph.D.,” Áfra remarks. “After a while, I felt that it wasn’t satisfying enough for me. It got repetitive because we have a curriculum, and every year you basically need to teach the same thing. However, I learned things during teaching, such as how to explain difficult concepts to people who are not really familiar with that domain; something which can be used outside of teaching.” Áfra also attended the Budapest University of Technology and Economics, where he met his most influential mentor. “Professor László Szirmay-Kalos is a legend in computer graphics in Hungary. He was one of the first researchers to introduce computer graphics teaching in universities. He wrote some famous books as well, which helped many Hungarians get started and interested in the domain. Under his lead, I started my Ph.D., mostly regarding ray tracing, and that was the primary focus, which continued later on as well.”

During his Ph.D., Áfra attended multiple conferences, which led to his being recruited by Intel Sweden Tech Lead Tomas Akenine-Möller as a rendering intern; subsequently, he was hired full-time as a ray tracing software engineer. “There were so many hacks with rasterization,” Áfra observes. “You couldn’t even do simple reflections; that also needed to be hacked. Movies looked so much better than games, and there was obviously a gap between them. One motivation for me was, ‘How far could we push real-time graphics so we could get closer to what the movies can achieve?’ Ray tracing was something that was too far and not achievable at that time, not even for movies and especially not for games. But after all the reading and demos that I saw, I liked the elegance of it. I also did it for the sake of doing it because it was interesting to me. The other thing is that I wanted to do something that could help people create nice images.”

Computer software development is driven more by the needs of the client than by anticipating what will be needed. “In the case of Intel Open Image Denoise, it wasn’t the first denoiser on the market, but it was the first open-source AI denoiser,” Áfra explains. “The fundamental requirements were clear, like, what it should do roughly, what kind of inputs it should expect, what kind of output it should produce, and roughly how it should integrate into a workflow. As soon as we have some kind of basic functionality up and running, then we basically need feedback from clients to say, ‘This is good, but that should be better or faster. The quality here isn’t that good. I need this feature.’ As you move along with the process, you get more people and customers to talk to, and in many cases, everyone wants the same thing. There are some features that are universally required. In other cases, it wasn’t something we thought would be necessary, but customers said, ‘This would be helpful for our workflow.’”

Creating software for a hardware company is not an entirely different dynamic. “The end result is much the same regardless of what kind of company is paying for the project,” Áfra states. “But internally, it’s different, because justifying creating purely software products in a hardware company isn’t trivial. There are some obvious benefits and connections to the hardware. One of the goals of Embree and Open Image Denoise was to make sure that these kinds of rendering tasks would work efficiently on Intel hardware. But these aren’t exclusive to Intel hardware. Initially, both targeted only CPUs, but implicitly, that meant that not only Intel CPUs would be supported. Later on, for Open Image Denoise, GPU support was added for many different vendors; that was something that we had to do because of the demands from the customers.”

Hardware and software influence each other. “The advantage of the ability to work on such a project in a hardware company is that we know up front what kind of hardware we’ll be releasing,” Áfra observes. “This means we can make sure that by the time that particular hardware gets released, the absolutely optimized version of the software can already be on the market on day one. The other thing is that there’s also an opportunity to influence the hardware. Since the hardware and software are being developed at the same company, we can work together to ensure that a future version of the hardware could take better advantage of the kind of workload that we are working on.”

The ambition to create nice images led to two Academy Awards. “Embree was recognized for three reasons,” Áfra states. “Its main goal was to squeeze out every last bit of performance from whatever CPU or hardware you have. The second reason was there weren’t that many libraries, especially for visual effects, that were open source. The third one was the ease of use.” Denoising was the natural next step. “Open Image Denoise got popular because there was no other AI-based denoiser on the market that was open source. Modification, regardless of the nature of it, was important. Performance was another important aspect. Open Image Denoise was not only suitable for offline rendering but also for interactive rendering, which is a critical part of the artistic process. Finally, being able to run on CPUs meant Open Image Denoise could operate anywhere; that sensibility was one of the other reasons it got the award.”

Some of the software has been used in surprising ways. “There were a couple of cases where I was like, ‘Wow. This is amazing,’” Áfra remarks. “For example, in one particular case, even though the project was designed for rendering beautiful images for visual effects or CAD, we learned just by accident that it is being used for thermal modeling for buildings to determine which walls are getting more sunlight and how they should design the interior so that the interiors wouldn’t be too warm.” Computer programming requires abstract thinking. “Many students have a hard time understanding some of the more abstract concepts in programming like, ‘What is a variable? What is a CPU register? How do you fix something in there?’ Some of this thinking comes from math.”

Often, limitations open up other possibilities that otherwise would not be pursued. “Jurassic Park is a classic example of this,” Áfra notes. “Computer graphics was in its infancy at that time, but still, after many years, Jurassic Park is considered amazing. If you looked back at how it was created compared to modern visual effects, it’s absolutely crazy. A lot of that happened because of the limitations they had at the time. Sometimes, if you have too many options or something is too easy to do, especially with generative AI nowadays, you can get lazier.” AI has not altered the workflow. “Even if I’m doing AI for a project I’m working on right now, I still have to do ray tracing for the training dataset. AI is a little bit different because it’s more abstract. I don’t have as much control over a neural network as I do over the traditional code that I write.” Advances in technology inspire further innovation. “When we achieve the next frontier, we will realize that there are further problems to solve and we can push it even further, but what we have right now isn’t sufficient for that. Generative AI is the next step on this ladder.”

Eventually, real-time and offline rendering will merge. “It makes sense from a practical perspective,” Áfra states. “Artists can almost see the final result while they’re actually working on the model or scene. That’s a huge difference from just seeing the wireframe, then starting a render and seeing the result a few days later.” In the short term, there is still a lot of work to do with Open Image Denoise. “One key feature that is missing is temporal denoising; that makes a big difference in terms of the quality and reduces render time significantly. Once that is finished, there will still be a ton of work to do because real-time ray tracing is still resource-constrained, and we still cannot do what we can do with visual effects. There is still a lot of work and research to do with AI methods. The tools haven’t yet been developed to make the gap between real-time and offline smaller and smaller. This is something I’m thinking about nowadays, and it will keep me busy for at least a couple more years from now. I have way more work than I can handle lined up in front of me, for sure! Beyond five years, it’s impossible to say. This is such a dynamically changing landscape that I’m sure I will know what’s the next step once I get there.”