By CHRIS McGOWAN

Images courtesy of Amazon MGM Studios.

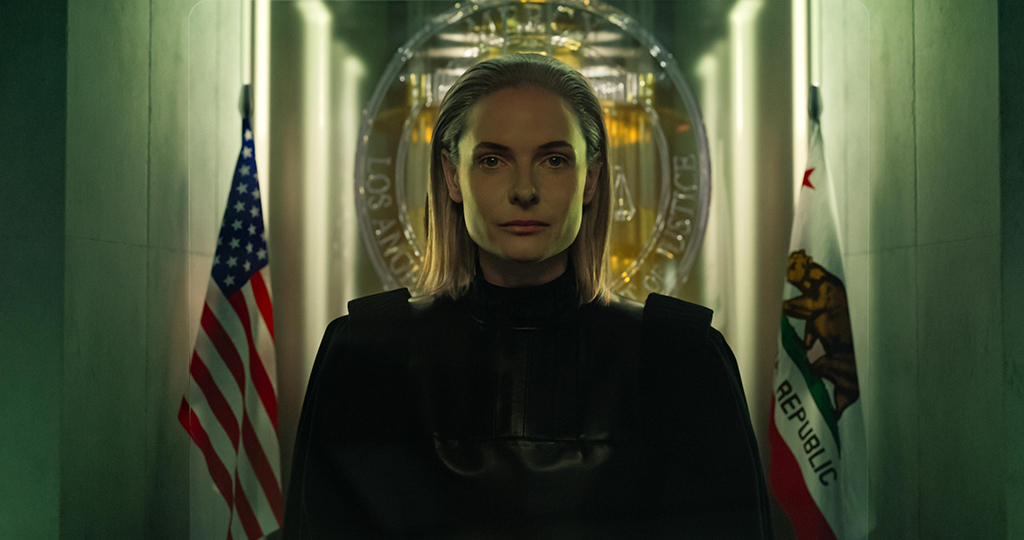

In 2029 Los Angeles, LAPD detective Christopher Raven (Chris Pratt) is accused of murdering his wife and must prove his innocence to an artificial intelligence judge while confined to a chair in Mercy Capital Court. For his defense, AI Judge Maddox (Rebecca Ferguson) grants him access to all available resources, but Raven only has 90 minutes to make his case. If found guilty, he faces immediate execution.

Directed by Timur Bekmambetov, Mercy is an Amazon MGM Studios production that also stars Kali Reis, Annabelle Wallis, Chris Sullivan and Kylie Rogers. For many sequences, the film used the LED wall on Stage 15 at the studio’s Culver City lot, and DNEG supplied the visual effects.

When designing Mercy, the post-production team set out to create a grounded near-future look that teetered on the edge of science fiction. “We’re already living alongside things that would’ve felt like sci-fi not long ago,” Chris Keller, VFX Supervisor at DNEG, says. “AI, VR, algorithms, smart homes. But that also means the audience has a much sharper instinct for what feels plausible versus what feels like hand-wavy future tech. So, you can’t just crank the design up to 11 the way a lot of ’90s sci-fi did. You have to lean into subtlety, restraint and taste, and make sure the technology has a purpose. That was our guiding principle on Mercy – we based everything on existing tech and pushed it just enough to facilitate the leap into suspension of disbelief.”

DNEG VFX Supervisor Simon Maddison adds, “There wasn’t a single thing we did that didn’t have a real-world example to reference. From the performance and design of the quadcopter to the CG homeless community populating the streets, everything started with and referred back to existing examples. It was near future rather than science fiction, which means the audience hopefully feels familiar with what they’re seeing rather than overwhelmed with unfamiliar design.”

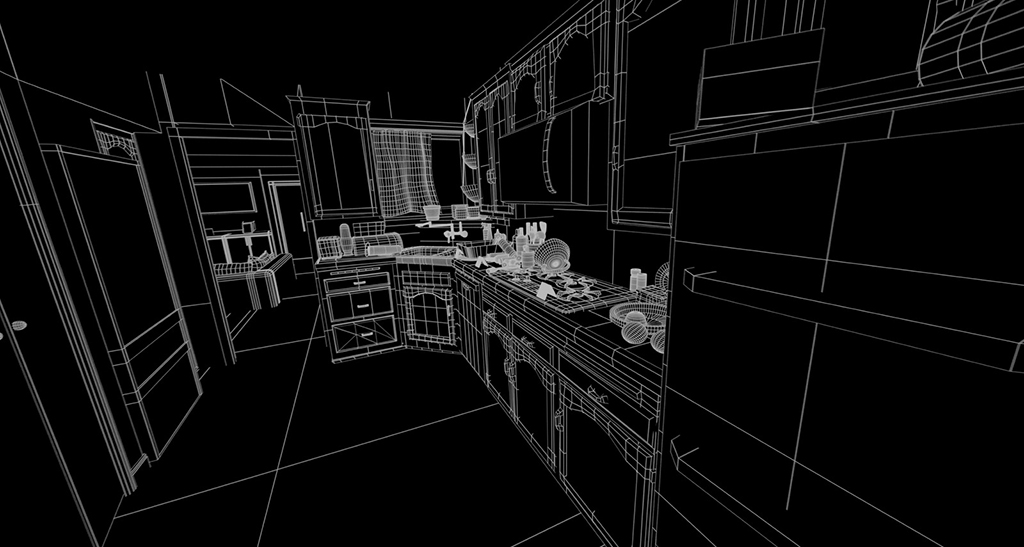

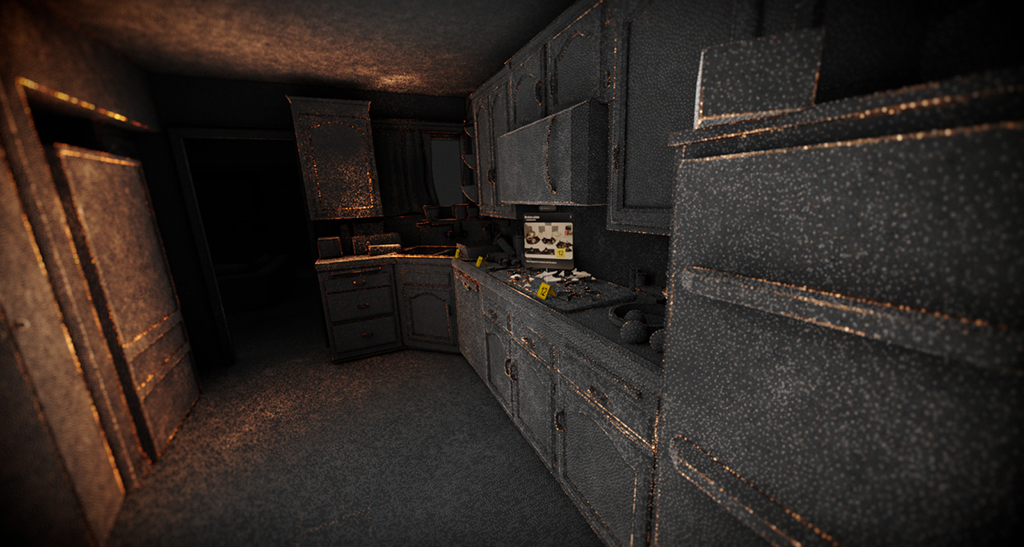

One of the most striking sets was the courtroom, which featured a server room beneath its glass floor, two adjacent labs and a futuristic computer set behind the AI judge. Keller comments, “The courtroom was meant to feel like a believable near-future space the audience could spend 90 minutes in, not a flashy sci-fi set that overpowers the story. We kept recognizable courtroom components in the design, but made it clear that there is technology running the room. The labs, data center and quantum computer were all based on real-world references.”

Lighting the room was a creative challenge. “We had to keep it dark enough for screen readability while still letting the main characters read as the hero subjects,” Keller explains. “A lot of the mood was motivated by the courtroom lighting, which included the data center glow under the frosted glass floor, the labs to either side and the lighting around the computer area behind Judge Maddox.”

“A huge advantage was that the courtroom existed on the volume during the shoot in Unreal, wrapped around the set,” Keller notes. “This helped Chris Pratt with eyelines and delivered interactive lighting from the same directions it would come from in our work. Many medium and close shots could lean heavily on the photography, while wider shots often became ‘keep Chris and part of the chair and replace the rest.’”

Keller says, “After principal photography, we ingested the Unreal courtroom asset, then up-res’d and refined it. The fundamentals stayed consistent, but there was room to iterate once we were responding to editorial and final story beats. We kept lookdev running in Unreal for a while for speed, then moved to RenderMan for final rendering once the design and lighting intent were locked.”

Visual effects work was extensive in the large-scale Los Angeles chase scenes, which included CG traffic, crowds, helicopters and police vehicles. Maddison notes, “There is a sequence in the film that takes place across all of Los Angeles, from the outskirts right into the heart of downtown. It spans a large distance geographically and also a large part of the film, forming the backdrop to the story’s final act. Because of the nature of the visuals in the film, it also needed to be witnessed from multiple points of view, often within the same frame: traffic cameras, Jaq’s dashcam from her quadcopter, a drone following the action from street level and sometimes aerial drone shots. This was achieved using many different approaches.”

Production shot the journey practically, with camera cars following a real truck through the streets and a crane and a camera drone flying the same path. Maddison elaborates, “It was early morning, so there was no other traffic or pedestrians. Most of the destruction seen in the film within these shots was CG, using a library of vehicles we had built and rigged for that purpose. A crowd system was also developed to add reacting pedestrians, sometimes just viewing from the sidelines, but also dodging the speeding truck. The director’s vision also included tent cities scattered about, so all of this needed to be added as well. Once again, this all needed to be built and rigged for destruction.”

Maddison continues, “They shot an array of that same journey using specialized cameras mounted on camera cars for street level and a heavy-lift drone for the aerial shots. This array was stitched and used in a couple of ways. A volume was used for much of Jaq’s POV [Detective Jaq Diallo, played by Kali Reis] and dashcam. Her quadcopter was mounted on a gimbal for realistic movement. There was also a more traditional sim-trav from within the truck. Even if this was replaced, we had a good 360 array of the footage to use and, if nothing else, it provided fantastic lighting and reflections on the actors and their vehicles.”

“Sometimes that 360 array was used as a background plate, allowing us to control the 3D vehicles and camera completely in VFX,” Maddison explains. “The shot where Jaq’s quadcopter swings around to face the truck, for example, and the shot where the truck smashes through multiple police cars on the bridge, these were completely generated in 3D, with the background ‘plate’ being created from the stitched array. And finally, a couple of the locations, like the exterior of the municipal building, were generated in 3D, so the fence and the entrance could be crashed through by the truck.”

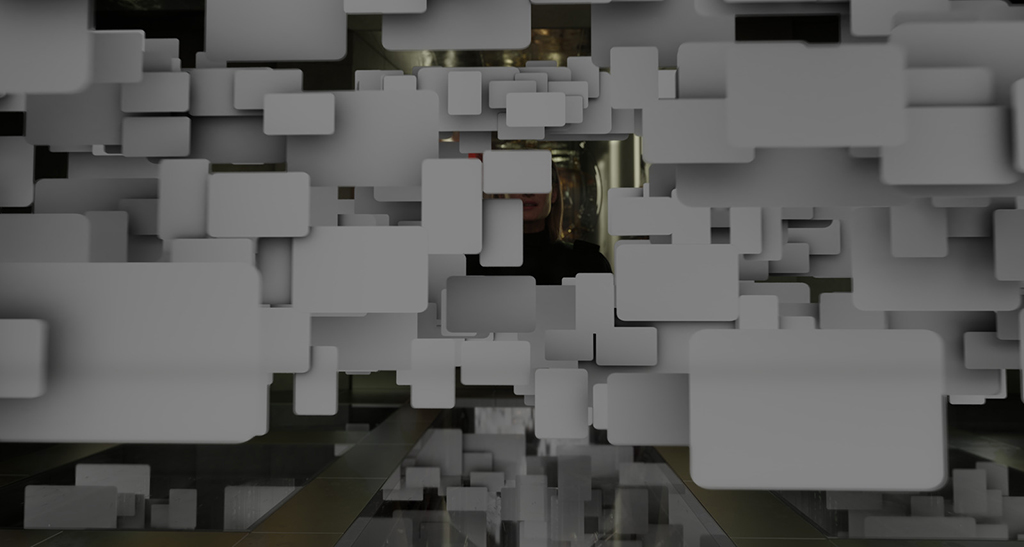

Good reference was key to making the CG feel seamless. Maddison says, “We collected video reference for every single crash and held the animators and FX artists to that reference.”

The municipal cloud called for a different approach. Keller says, “Most screens in the film were handled with an advanced 2.5D Nuke setup, utilizing the new USD workflow in Nuke 14, which allowed us to manipulate the screen geometry and make layout changes in comp. But the municipal cloud – the hundreds of screens surrounding Chris when the database first boots up – had to be fully 3D. We loaded the content into Houdini and instanced it procedurally so we could iterate at scale, then did art-direction passes removing duplicates, swapping content, building hero clusters.”

Keller continues, “The brief was that it had to overwhelm Chris and the audience, but it couldn’t feel random. It needed an internal logic that provided visual clarity, so we came up with the following structure: the first layer is always location footage – CCTV, phone, bodycam, which spawns deeper layers of related items, such as reports, records or documents. The result fills the room while still feeling like a real system sorting information. The content volume was enormous. In some wides, you’re looking at over a thousand images and videos at once. Sources were a mix of main shoot material, friends and family submissions, art department elements and stock footage. Because of the scale, we had a dedicated ingest effort focused on importing and managing the content library, and we stayed in constant communication with production and VFX editorial so it remained coherent.”

According to Maddison, there were two types of CG drones in the film: “the quadcopter that Jaq and the police rode, and what we called the ‘logistic drones.’ The quadcopter was achieved in multiple ways, depending on the requirement. Sometimes it was a practical buck attached to a crane arm for shots on location, with the actor performing while sitting on it. In these instances, we painted out the crane, added moving fans and augmented the dust effects on the ground. For other parts of the sequence, the same practical buck was used, but this time on a gimbal arm shot within a volume. For these shots, there was a 360-degree array of the journey it took through the streets of Los Angeles that became the background. In some instances, it was entirely CG, including a digital double of the police riders.”

He adds, “The logistic drones were a collection of fixed-wing delivery drones we designed and used to populate an airborne crowd/traffic system. In some of the wider shots of L.A., you can see a system of them flying around above the streets.”

The environments called for a mix of approaches. “From DMP projections of damage onto real buildings to 2.5D matte paintings, all the way to fully CG environments, we needed to create it all. For the finale of the film, for example, the exterior of the municipal building and the surrounding streets were created in 3D,” Maddison notes.

The sheer volume of screen content required exceptional continuity across hundreds of shots. Maddison explains, “The challenge with this was that any single shot could comprise up to five shots of the same event, plus the graphics that also described what was happening. In terms of the journey through the streets of L.A., we needed to line up the same piece of footage we were using as the background or render the same animation from multiple angles. Working on one piece of action in isolation was never really an option. Until we saw it with its partner angles, we never really knew if it was working.”

Maddison states, “There was such a complicated dance between all of these things that it often took some time to get it right. The result is very unique, though, and one that we’re all very proud of.”